Did a Scientific Study Make Major Depression Disappear?

Only if you believe a boatload of authors waving magic wands and chanting mystical voodoo incantations

I credit a Substack article by Awab Aftab for calling my attention to a preprint that warrants a series of Substack articles. The preprint is an excellent teaching example of what is wrong with current practices by which authors conduct scientific research and publish their interpretation of results in the safety of a strongly supportive, inbred group that shields its members’ papers from robust critique and independent pre- and post-publication review. In contrast, my readers are self-selected for skepticism and for their interest in honing their skills in critical appraisal of evidence. Many will conclude that I am doing a public service, even if the authors of this preprint are not enthusiastic about being in the spotlight. Hopefully, by the time my series of Substack articles winds down, these authors will begrudgingly agree that I have given them a valuable incentive to acknowledge past mistakes and do things differently. That is how robust criticism is supposed to work in America.

Awab’s Substack article is “DSM Disorders Disappear in Statistical Clustering of Psychiatric Symptoms: Whither Major Depression?”

The open-access preprint is

“Reconstructing Psychopathology: A data-driven reorganization of the symptoms in DSM-5”

Awab Aftab’s Substack article is written in a distinctive three-part format that quickly points readers to where they can find particular things.

The first part (Act 1) is typical of Awab. He heaps lavish and unwarranted praise on a paper, praise that may later embarrass both him and the authors. In this case, we are told about

a brilliantly designed and innovative study of the quantitative structure of psychopathology with important ramifications for our understanding of psychiatric classification. No one has conducted a study like this before, and the results are remarkable. It takes place in the context of the development of Hierarchical Taxonomy of Psychopathology (HiTOP) which is a dimensional, hierarchical, and quantitative approach to the classification of mental disorders, and relies on identification of patterns of covariation among symptoms.

The second part (Act 2) describes the method and results with mostly accurate details that sound innocuous to an untrained reader. The problem lies in what Awab omits.

Having since read the preprint, I found the sampling strategy it describes for the online survey worrisome. The authors assembled a large sample, more or less arbitrarily, from smaller convenience samples, and the unexamined differences among those subsamples will make the results difficult to interpret. I would anticipate trouble for anyone trying to make sense of them. Responses were collected from an unknown number of pregnant women experiencing morning sickness and postpartum women who did not feel like going dancing or doing other things they had enjoyed before giving birth. Had they been asked (they were not), they would have reported that they were sleepless from tending to a colicky newborn or housebound because they could not find a babysitter to whom they felt safe entrusting the welfare of their precious newborn. Other responses were collected from anonymous subjects who indicated that an unnamed someone had told them at some point in their lives that they were clinically depressed or had bipolar disorder, or borderline personality disorder (we don’t know whether the accuser was an ex-lover or a board-certified psychiatrist, which probably matters). Other responses were collected from middle-aged men in various stages of substance misuse, remission, and relapse. Last, but certainly not least problematic, responses were obtained from college undergraduates who were given course credit for completing the survey, an incentive different from those used to recruit the other samples.

The choice of response key for the items that subjects ticked off was alarming, not just worrisome.

“Participants reported how true each symptom statement was for them in the past 12 months on a five-point scale from Not at all true (Never) to Perfectly true (Always). Participants were told to think about their experiences across a wide variety of contexts.”

Consider peripartum women. In responding, they must somehow fold in their experiences from before they were pregnant. The middle-aged men must think back to when they relapsed: when they began crying in their beer again because a girlfriend or boyfriend had dumped them, at varying times of the year. Consider, too, the college students, who must ponder how much weight to give to how they felt during spring break, after they passed or failed exams, or after they got drunk and had sex to which they would not have agreed when sober. That does happen. The possible differences in the assumptions subjects brought to the survey are endless, and they will generate a lot of uncontrollable noise.

The authors relied on impressive-sounding statistical analyses to sort all this out. I will save for another time what many other experts and I think of the authors’ reliance on principal component analysis and exploratory factor analysis. Surely, my nerdy readers committed to improving the trustworthiness of the psychological literature can appreciate the enormous freedom the authors had in interpreting preliminary results and deciding what to do next.

The authors placed what they labeled a “Big Everything” at the pinnacle of their “hierarchical taxonomy.” Some of us recognize this as a bright and shiny, “new and improved” rebranding of what the superbly articulate genius Paul Meehl termed the “crud factor” and what another gang of experienced researchers called the “big mess.”
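A toy illustration of why some “Big Everything” is nearly guaranteed: assume, purely hypothetically, 20 symptom items in which every pair correlates at a modest r = 0.25 (numbers chosen for illustration, not taken from the preprint). The Python sketch below builds that correlation matrix and finds its largest eigenvalue by power iteration; that eigenvalue is the variance captured by the first principal component. The function names are my own.

```python
import math


def equicorrelation_matrix(k, r):
    """k x k correlation matrix with 1s on the diagonal and r everywhere else."""
    return [[1.0 if i == j else r for j in range(k)] for i in range(k)]


def mat_vec(matrix, vector):
    """Multiply a square matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def largest_eigenvalue(matrix, iterations=200):
    """Power iteration: multiply and renormalize, then take the Rayleigh quotient."""
    v = [1.0 + 0.1 * i for i in range(len(matrix))]  # arbitrary non-uniform start
    for _ in range(iterations):
        w = mat_vec(matrix, v)
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # v has unit length, so v . (Mv) estimates the dominant eigenvalue
    return sum(a * b for a, b in zip(v, mat_vec(matrix, v)))


k, r = 20, 0.25  # hypothetical: 20 items, every pair correlated at 0.25
corr = equicorrelation_matrix(k, r)
first_eigenvalue = largest_eigenvalue(corr)  # theory: 1 + (k - 1) * r
share_of_variance = first_eigenvalue / k     # total variance of k standardized items is k

print(f"first eigenvalue:  {first_eigenvalue:.2f}")
print(f"share of variance: {share_of_variance:.1%}")
```

The first eigenvalue is 1 + 19 × 0.25 = 5.75, roughly 29% of the total variance, while each of the remaining 19 eigenvalues equals 1 − r = 0.75. A dominant general component towers over everything else even though no two items correlate above 0.25. Pervasive modest intercorrelation, Meehl’s crud, produces a factor at the top of the hierarchy by arithmetic alone.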

Hotel Prinsenhof (Source: Wikipedia)

Some of us spent years grappling with this problem and emerged having to confess defeat. I was privileged to deliver a talk about it in the historic Hotel Prinsenhof in Groningen, on a panel honoring Professor Hans Ormel. Hans had concluded from his life’s work that inventories built from brief, negatively worded self-report survey items (aka neuroticism) constituted an uninformative risk marker (bad) rather than a genuine risk factor (good) for future mental and physical health problems. I knew that the very senior Sir David Goldberg was in the audience and that, after my talk, he and I could gather a small group to drink a lot of Calvados and poke fun at what the crud factor had done to the researchers who tackled it. Sir David could recount having succumbed to the big mess himself in primary care research that tried to distinguish subjective reports of depressed mood and demoralization from pain and fatigue. A version of my presentation is preserved on SlideShare under an apt title: “Negative emotions and health: Why do we keep stalking bears when we only find scat in the woods?”

A helpful guide to distinguishing a bear from scat.

Aftab’s Part 3 (Act 3, Denouement) delivers a more sober assessment of the article than he offered at the outset, drawing on a quote from the preprint. He says:

There are some important limitations to note with regard to the Forbes et al. survey. It relies only on self-reported symptoms, and features requiring clinician observation are missing; symptoms are decontextualized (e.g., insomnia due to substance withdrawal isn’t differentiated from insomnia due to anxiety); all symptoms were assessed using a 12-month time scale, even though different symptom patterns exist at different time scales.

Forbes et al. themselves note,

“It will also be essential to understand which aspects of these results—particularly the fine-grained levels of the structure—are robust to other approaches to measurement (i.e., using alternative measures, time frames, multi-method or multi-informant approaches, as well as within-person assessment) and across intersectional conceptualizations of identity (e.g., in a variety of sociodemographic and culturally and linguistically diverse samples).”

In the preprint, Forbes et al. do not dwell on their failure to show robustness in their attempted split-sample replication.

These authors must be familiar with Hans‐Ulrich Wittchen and Katja Beesdo-Baum's scathing assessment of HiTOP: “Using new words for old ones might increase the risk that already established research findings lack consideration in the future.”

“HiTOP provides little specific guidance towards our ultimate goal, namely, a classification of mental disorders based on causal factors and mechanisms involved in the first development of psychopathology and its progression over time. Its inherent weakness remains the overemphasis on cross-sectional psychopathology and the neglect of dynamic developmental pathways and differential diagnostic issues relevant to treatment and management.”

Expect me to expand on this assessment in future Substack articles. I will also invoke John Ioannidis’s explanation of why enthusiasm for another variation of negative affectivity, the Type D Personality Scale, persisted for so long. Claims about the validity of the Type D Personality Scale depended on a statistical trick that inevitably yielded spurious results. A small, inbred team of enthusiasts managed to keep null findings out of the literature. Then Dutch psychologist Niels de Voogd and I piled on with two negative prospective studies, the largest-ever decisively negative RCT, and a meta-analysis. Ioannidis commented that scientific inbreeding and same-team efforts at replication allow

some types of seemingly successful replication [to] foster a spurious notion of increased credibility, if they are performed by the same team and propagate or extend the same errors made by the original discoveries. Besides same-team replication, replication by other teams may also succumb to inbreeding, if it cannot fiercely maintain its independence. These patterns include obedient replication and obliged replication.

Consider this Substack article my opening argument, as if we were an investigatory commission. The authors and others are welcome to offer rebuttals, and I will assume the burden of providing evidence and elaboration for claims I only have space to state here.

During the discussion, clinicians will have a chance to offer valuable input challenging the clinical relevance of the opinions expressed in the preprint.

It is time for open discussion and robust critique of the HiTOP movement. I welcome opportunities to give presentations before live audiences, in person or remotely. Some HiTOP proponents, including a senior author of the preprint, have been ruthless and hateful in discouraging disagreement. Let’s say “No!” to that kind of antisocial behavior.

A plea for paid subscriptions and other contributions.

I have been harassed since 2017 by a Dutch psychologist who has coauthored other papers with the first author of this preprint. He became upset when a senior psychiatrist and I would not praise his work counting adjectives and calculating the number of permutations and combinations. The result was that he could make the diagnosis of melancholic depression disappear, with some patients who met the criteria endorsing entirely non-overlapping sets of items. The senior psychiatrist was Holocaust survivor Bernard “Barney” Carroll. Barney was nearing his final days and was considered a world expert on diagnosing melancholic depression, having invented and validated the dexamethasone suppression test. Barney was united with me in responding to antipsychiatrists in the UK who considered biological psychiatrists, Barney included, to be Nazis. After Barney died, the UK group attacked my heavily accessed blog post on the Adverse Childhood Experiences (ACEs) Checklist with the same antisemitic trope.

Predictably, harassment from this unholy alliance has resumed with the mere announcement that I would write this article. The pile-on has started. If the past is any guide, the initial responses will be rather silly, and then the doxxing and harassment of my family will follow. Then, maybe, a vague call to find me and “take me out.”

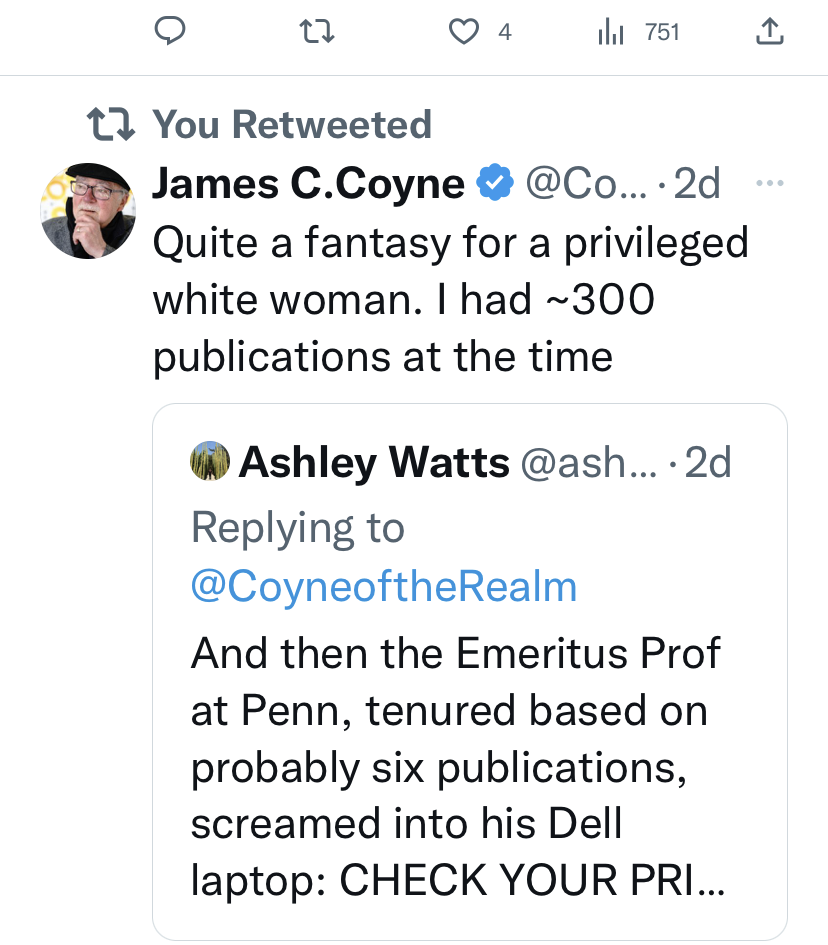

I invite any author of the preprint to respond. However, any criticism is considered violence, and criticism of an article with a white woman as first author is considered violence against women. With twisted logic, the mean girls and their friends have poured onto the middle-school playground.

Recently, I was deplatformed and barred from an invited writing workshop in Portugal because of the intervention of a German leftist who cited Peter Kinderman’s criticism of me. I was given no opportunity to respond in what will become an international issue. More recently, there have been renewed efforts to drive me from social life, with fresh attacks whenever I criticize anyone.

I will announce other ways to support me. Until then, please consider purchasing or gifting a paid subscription or contacting me via Substack about how you can contribute in other ways.
